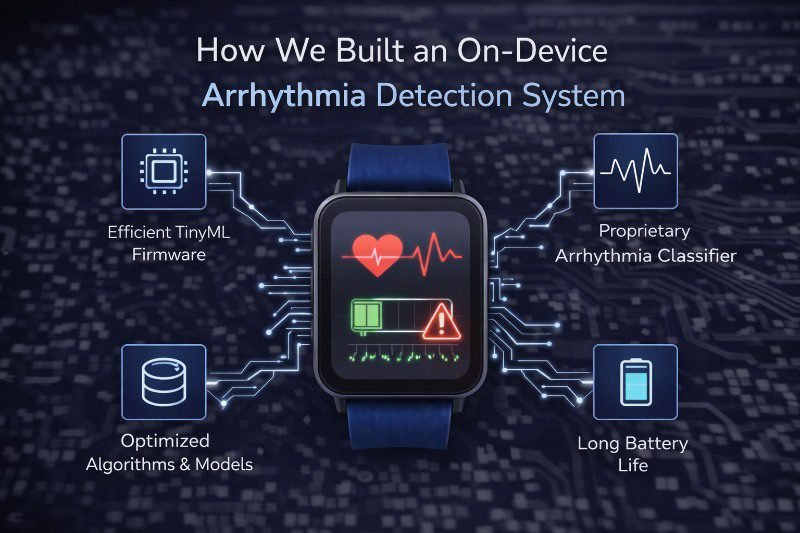

When we set out to build an on-device arrhythmia detection system, innovation was never the headline goal.

Reliability was. We weren’t trying to prove that AI could run on tiny hardware. We were solving a far more grounded and urgent problem:

How do you deliver life-saving cardiac intelligence on a chip with less RAM than a smartwatch face, without cloud dependency, without latency, and without failure?

Because in emergency medicine, “almost real time” is still too slow. And “we’ll sync it later” is not an acceptable answer.

Why Cloud-Based Diagnostics Fall Short in Real Care Settings

Many cardiac monitoring solutions rely on cloud infrastructure for analysis. In controlled environments, that works. In real life, it often doesn’t.

Ambulances lose connectivity. Rural clinics don’t have reliable bandwidth. Home-care environments depend on inconsistent networks. For arrhythmia detection, latency is not just a performance metric; it’s a clinical risk.

A system that detects atrial fibrillation or ventricular abnormalities seconds too late may miss the window for intervention. That reality made our architectural decision straightforward:

All detections had to happen on the device.

No cloud inference. No round-trip delays. No dependency on external infrastructure.

The Constraints Were Brutal and Non-Negotiable

From the outset, Medical Device Hardware Design defined our boundaries.

We were working with:

- A Cortex-M4 MCU

- Extremely limited RAM and flash

- Strict power budgets for continuous operation

- Real-time ECG sampling requirements

There was no GPU. No neural accelerator. No margin for inefficiency. Every byte mattered. Every millisecond mattered. Every microamp mattered.

This was not a problem we could solve by “making the model smaller” alone. It required rethinking the entire system from signal acquisition to firmware scheduling to inference execution.

Rethinking the Stack from the Ground Up

Rather than starting with an ML model and forcing it onto embedded hardware, we reversed the process. We designed the system backward from the constraints.

That meant rebuilding the stack across three tightly coupled layers:

- Hardware-aware signal acquisition

- Power-efficient firmware architecture

- Ultra-compact, deterministic ML inference

This is where Hardware-Firmware Development becomes a systems discipline, not just an implementation task.

Making TinyML Clinically Viable

Our original arrhythmia detection model performed well on larger systems but was unsuitable for embedded deployment.

Instead of blindly compromising accuracy, we focused on preserving clinical signal integrity while aggressively reducing computational cost.

Key steps included:

- Quantizing the model to sub-8-bit weights without degrading the F1 score

- Eliminating redundant layers and operations

- Optimizing feature extraction to reduce runtime complexity

- Designing inference paths for fixed-point arithmetic
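To make the quantization and fixed-point steps concrete, here is a minimal host-side sketch of symmetric weight quantization followed by an integer-only multiply-accumulate. It is an illustration, not our production pipeline: the 4-bit weight width, the 8-bit input width, the sample values, and the function names `quantize_symmetric` and `int_dot` are all assumptions chosen for the example.

```python
def quantize_symmetric(values, bits):
    """Map floats to signed integers of the given width, plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                 # e.g. 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def int_dot(q_weights, q_inputs):
    """Integer-only multiply-accumulate, the core of a fixed-point kernel."""
    acc = 0
    for w, x in zip(q_weights, q_inputs):
        acc += w * x
    return acc


# Hypothetical layer weights and normalized input features.
weights = [0.42, -0.17, 0.08, -0.70, 0.33]
inputs = [0.10, 0.80, -0.25, 0.45, -0.60]

q_w, s_w = quantize_symmetric(weights, bits=4)   # assumed sub-8-bit weight width
q_x, s_x = quantize_symmetric(inputs, bits=8)    # assumed activation width

# All arithmetic stays in integers; the scales are applied once at the end.
approx = int_dot(q_w, q_x) * s_w * s_x
exact = sum(w * x for w, x in zip(weights, inputs))
error = abs(approx - exact)
```

Comparing `approx` with `exact` on representative data is one simple way to check how much a given bit width costs before measuring the effect on F1.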

The result:

- Full inference pipeline within a ~120 KB footprint

- Deterministic execution with predictable latency

- Clinically meaningful detection accuracy preserved

This was not about shrinking AI for novelty. It was about making intelligence accountable at the point of care.

Signal Processing Was as Critical as the Model

In cardiac diagnostics, raw ECG data is messy. Motion artifacts, electrode impedance changes, and environmental noise can easily overwhelm naïve ML models, especially on constrained devices.

We built a real-time ECG preprocessing pipeline that:

- Filtered baseline wander and high-frequency noise

- Normalized signals dynamically

- Segmented PQRST complexes accurately at 200Hz

All preprocessing ran locally, before inference.

This approach ensured that the TinyML model focused on physiologically relevant features instead of compensating for noise. In embedded medical systems, good signal conditioning often matters more than model depth.
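As a rough illustration of that kind of conditioning chain, the sketch below applies a first-order high-pass filter for baseline wander, a short moving average for high-frequency noise, and per-window peak normalization. The filter types, the 0.5 Hz cutoff, the 5-sample window, and the synthetic test signal are assumptions for the example; only the 200 Hz sampling rate comes from the system described here.

```python
import math

FS_HZ = 200.0  # sampling rate stated in the article


def high_pass(samples, cutoff_hz=0.5, fs_hz=FS_HZ):
    """First-order high-pass: y[n] = a * (y[n-1] + x[n] - x[n-1])."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    a = rc / (rc + 1.0 / fs_hz)
    out = [0.0]
    for n in range(1, len(samples)):
        out.append(a * (out[-1] + samples[n] - samples[n - 1]))
    return out


def moving_average(samples, width=5):
    """Causal boxcar smoother that attenuates high-frequency noise."""
    out, acc = [], 0.0
    for n, x in enumerate(samples):
        acc += x
        if n >= width:
            acc -= samples[n - width]      # drop the sample leaving the window
        out.append(acc / min(n + 1, width))
    return out


def normalize(samples):
    """Scale one window so its largest absolute value is 1."""
    peak = max(abs(x) for x in samples) or 1.0
    return [x / peak for x in samples]


# Synthetic stand-in for an ECG trace: slow 0.2 Hz drift plus a 10 Hz component.
raw = [
    1.5 * math.sin(2 * math.pi * 0.2 * n / FS_HZ)
    + 0.5 * math.sin(2 * math.pi * 10.0 * n / FS_HZ)
    for n in range(2000)
]
clean = normalize(moving_average(high_pass(raw)))
```

On a real device the same stages would typically run sample by sample in fixed-point arithmetic rather than over whole lists, but the structure of the chain is the same.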

Firmware Was the Backbone of the System

None of this would have worked without disciplined firmware development.

The firmware was responsible for orchestrating:

- Continuous ECG sampling

- Real-time preprocessing

- ML inference execution

- Alert generation

- Power management

All under strict timing and energy constraints.

Key firmware optimizations included:

- Event-driven task execution instead of polling loops

- DMA-based data transfers to minimize CPU load

- Aggressive use of sleep and stop modes between acquisition windows

- Precise timer configuration to maintain 200Hz sampling without drift
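The drift-free timing point comes down to integer arithmetic: the timer clock must divide evenly into the sample rate. The sketch below searches for a prescaler and period pair that yields exactly 200 Hz. The 84 MHz timer clock, the 16-bit divider limit, and the function name `find_timer_dividers` are hypothetical; the actual clock tree depends on the specific MCU and board.

```python
def find_timer_dividers(timer_clock_hz, sample_rate_hz=200, max_count=65536):
    """Return (prescaler, period) with prescaler * period == clock / rate.

    Both values are divider counts in 1..max_count. Many timer peripherals
    store count - 1 in the register, so adjust when programming hardware.
    Returns None when no exact integer split exists.
    """
    if timer_clock_hz % sample_rate_hz != 0:
        return None  # the rate could only be approximated, not hit exactly
    total = timer_clock_hz // sample_rate_hz
    for prescaler in range(1, max_count + 1):
        if total % prescaler == 0 and total // prescaler <= max_count:
            return prescaler, total // prescaler
    return None


TIMER_CLOCK_HZ = 84_000_000  # hypothetical timer input clock

prescaler, period = find_timer_dividers(TIMER_CLOCK_HZ)
actual_rate_hz = TIMER_CLOCK_HZ / (prescaler * period)
sample_period_ms = 1000.0 / actual_rate_hz   # time budget per sample
```

When the function returns `None`, the nominal rate cannot be reproduced exactly from that clock, which is a signal to pick a different clock source or accept a known, bounded rate error.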

The result was a system that delivered:

- <50ms inference latency

- <5mA average current draw

- Continuous operation without thermal or stability issues

Firmware wasn’t just glue code; it was the control layer that made the system safe, responsive, and reliable.

Power Efficiency Was a Safety Requirement

In medical wearables, battery life is not a convenience feature. It is a clinical requirement.

A drained battery means:

- Missed arrhythmias

- Loss of diagnostic continuity

- Compromised patient trust

We treated power as a first-class safety constraint rather than an optimization goal. By aligning hardware capabilities with firmware scheduling and ML execution windows, we ensured the device could run continuously for extended periods without sacrificing detection fidelity.
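A simple way to reason about this budget is a duty-cycle-weighted average: current while active, current while asleep, and the fraction of time spent in each. The sketch below works through that arithmetic. Every number in it (active and sleep currents, active fraction, battery capacity) is a hypothetical placeholder rather than a measurement from our device; the only figure taken from this project is the target of staying under 5 mA on average.

```python
def average_current_ma(active_ma, sleep_ma, active_fraction):
    """Duty-cycle-weighted mean current in milliamps."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_ma * active_fraction + sleep_ma * (1.0 - active_fraction)


def runtime_hours(battery_mah, avg_ma):
    """Idealized runtime; ignores battery derating, regulators, and radio use."""
    return battery_mah / avg_ma


# Hypothetical placeholder values for illustration only.
ACTIVE_MA = 20.0        # sampling, preprocessing, and inference
SLEEP_MA = 0.5          # low-power mode between acquisition windows
ACTIVE_FRACTION = 0.2   # share of time the core is awake
BATTERY_MAH = 200.0

TARGET_MA = 5.0         # average-current target stated in the article

avg_ma = average_current_ma(ACTIVE_MA, SLEEP_MA, ACTIVE_FRACTION)
within_budget = avg_ma < TARGET_MA
hours = runtime_hours(BATTERY_MAH, avg_ma)
```

The formula makes the trade-off visible: shortening the active window or deepening the sleep state moves the average far more than shaving a little off peak current.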

This balance is only achievable when Medical Device Hardware Design and firmware are developed as a single system.

Why On-Device Detection Changes Clinical Outcomes

The finished system could:

- Detect arrhythmic patterns in real time

- Analyze PQRST morphology locally

- Trigger alerts immediately without cloud access

This matters because care doesn’t happen in data centers. It happens to patients.

In an ambulance, a village clinic, or a home-care setup, there is no time to sync, upload, or wait. You only have time to detect, decide, and intervene.

This Isn’t Edge vs. Cloud; It’s Responsibility Placement

This project reinforced an important lesson:

The debate is not “edge vs cloud.”

The real question is: Where must intelligence live to protect the patient?

For arrhythmia detection, the answer is clear: on the device.

Cloud analytics still play a role in long-term monitoring and population insights. But first-line detection must be immediate, deterministic, and independent. Embedded intelligence makes that possible.

Scaling MedTech Requires Embedded Accountability

When AI runs in the cloud, responsibility is abstracted. When AI runs on-device, responsibility is embedded.

Every inference must be:

- Predictable

- Explainable

- Power-aware

- Safe under failure

This fusion of edge engineering and clinical responsibility enables MedTech solutions to scale beyond controlled environments.

It’s not just about low-power AI. It’s about trustworthy intelligence, placed where it matters most.

Final Thoughts

Building an on-device arrhythmia detection system forced us to confront the realities of medical engineering: tight constraints, zero tolerance for failure, and the need for absolute reliability.

At Pinetics, this is the mindset we bring to every engagement. With deep expertise in Medical Device Hardware Design, Firmware Development Services, and Hardware-Firmware Development, we help MedTech innovators build systems that deliver real clinical value under real-world conditions.

When intelligence becomes ambient, embedded, and accountable, MedTech doesn’t just innovate; it saves lives.
